Meet the engineers behind the loudest tech of the decade.

If one phrase dominates business conversations in 2026, it is Generative AI. Every executive wants a slice of it, every startup pitches it. But inside LeverX, the Data Science unit led by Vitali Usau approaches the buzz with measured humor.

This is their story. Scattered across different countries, the team has become LeverX’s sober and pragmatic voice in a sea of AI excitement.

Meet the Team

Vitali Usau, Head of Innovation and Mobile Department. A LeverX veteran, he started as an iOS developer in 2010 and now heads the department, where he leads multiple teams and drives R&D initiatives.

Ilya Liatokha, Data Scientist. Engineer of production-grade sales forecasting models for firms with $1B+ in revenue and developer of graph neural networks for accurate chemical property estimation.

Yauheni Kachan, Data Scientist. A practitioner of data science across domains — from cancer detection to AI systems that enhance retail performance and accelerate scientific discovery.

Aliaksandr Radzivonau, Data Scientist. Developer of large-scale text classification pipelines and a keen mind in multi-agent systems, he explores the potential of LLMs and working robots.

What many at LeverX still call the “R&D department” was never a formal unit. It began as a small lab of engineers testing blockchain, IoT, and early data analytics to spark client imagination.

By 2017, the ad hoc demos had matured into a dedicated Data Science team. Together with iOS and Android squads, they evolved into the Innovation and Mobile department, where mobile craftsmanship meets AI exploration.

And it’s precisely that tension, between experiments and delivery, that shapes everything they build.

Pragmatism Over Hype

“Generative AI is exciting,” Vitali says, “but no model writes production-ready code without supervision. We design systems that are LLM-agnostic. They are flexible enough to swap between models as they evolve. That is the only way to survive the pace of change.” And yes, the ground keeps shifting constantly.
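One way to picture the LLM-agnostic design Vitali describes is a thin interface that application code depends on, with each model backend plugged in behind it. The sketch below is only an illustration under that assumption, not the team's actual code: `TextModel`, `EchoModel`, and `Summarizer` are hypothetical names, and `EchoModel` stands in for a real vendor client.

```python
from dataclasses import dataclass
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in backend for illustration; a real one would call a vendor SDK."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class Summarizer:
    """Application code that depends only on the TextModel interface."""

    def __init__(self, model: TextModel) -> None:
        self.model = model

    def summarize(self, text: str) -> str:
        return self.model.complete(f"Summarize: {text}")


app = Summarizer(EchoModel("model-a"))
first = app.summarize("quarterly sales report")

# Swapping the backend touches one line; the rest of the app is unchanged.
app.model = EchoModel("model-b")
second = app.summarize("quarterly sales report")
```

Because `Summarizer` never names a specific vendor, replacing a model as the field evolves becomes a configuration change and not a rewrite.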

The LeverX team has seen many hype cycles come and go. In 2020, it was computer vision; later, conversational bots; now, generative assistants. Each wave leaves behind useful tools, but rarely the silver bullet promised on stage. The harder question is always the same: which trends matter?

For some, the answer is restraint. Sometimes, says Yauheni, the most valuable thing their team delivers is an honest answer:

“AI isn’t needed here.”

Still, the developers share the fascination, because the tech is clearly groundbreaking.

Aliaksandr, who grew up reading Asimov’s sci-fi prose, now sees bits of that future coming true. “AI isn’t Skynet,” he says. “It’s more like T9 on steroids. But it’s already changing a lot.”

Ilya describes the evolution of their teamwork: “We began with classical machine learning: time series forecasting, anomaly detection, things you could explain with equations. Now, generative AI dominates every client conversation. Everyone wants in. Our challenge is distinguishing where it truly helps from where it’s just hype.”

While social media shows off AI tools that promise full apps in just a few hours, LeverX developers focus on making sure the code can handle real traffic.

As Vitali says,

“Clients love quick wins. A sleek, vibe-coded UI looks great, but it’s not a product. Give it 5,000 users, and it will fall apart.”

And so the question naturally follows: where do they see lasting momentum?

The Future of Tech

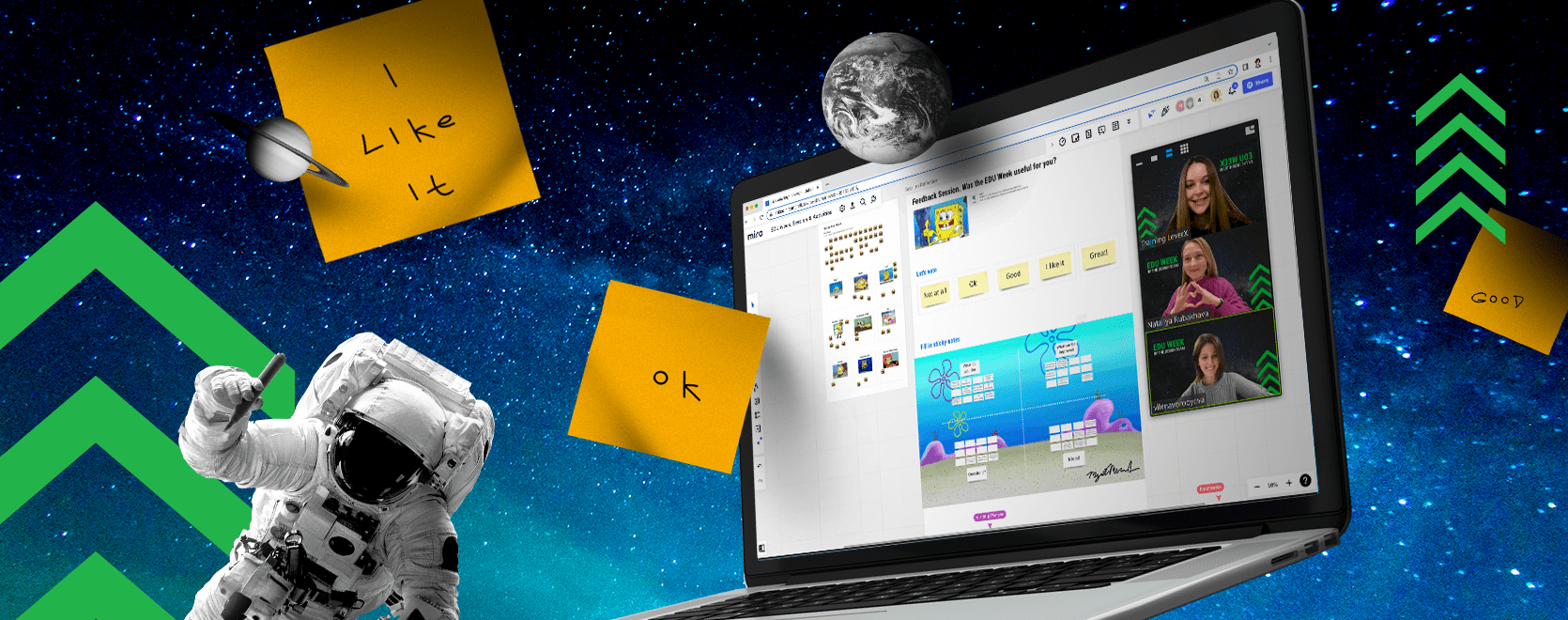

The group is most optimistic about MCP servers, which expose tools and data to AI agents through the Model Context Protocol and make it easier for multiple agents to coordinate tasks. They already use them for code review, with one model checking another’s output.
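The "one model checks another" pattern can be sketched as a small loop. This is a toy illustration under stated assumptions, not the team's setup: it does not use MCP at all, plain Python functions stand in for the two models, and `review_loop`, `toy_author`, and `toy_reviewer` are hypothetical names.

```python
from typing import Callable

# Hypothetical model type: takes a prompt, returns text.
Model = Callable[[str], str]


def review_loop(
    author: Model, reviewer: Model, task: str, max_rounds: int = 3
) -> tuple[str, int]:
    """Let `author` draft and `reviewer` critique until approval or the round limit."""
    draft = author(task)
    for round_no in range(1, max_rounds + 1):
        verdict = reviewer(draft)
        if verdict.strip().upper() == "APPROVE":
            return draft, round_no
        # Feed the critique back so the author can revise its draft.
        draft = author(
            f"{task}\nReviewer feedback: {verdict}\nPrevious draft: {draft}"
        )
    return draft, max_rounds


def toy_author(prompt: str) -> str:
    """Toy stand-in: writes a buggy draft first, fixes it once it sees feedback."""
    if "feedback" in prompt:
        return "def add(a, b): return a + b"
    return "def add(a, b): return a - b"


def toy_reviewer(code: str) -> str:
    """Toy stand-in: approves only code that actually adds."""
    return "APPROVE" if "a + b" in code else "add() should use +, not -"


final_code, rounds = review_loop(toy_author, toy_reviewer, "Write add(a, b)")
```

The round limit matters in practice: without it, two models that never agree would loop forever, so a human still needs to pick up whatever the loop could not settle.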

“It’s entertaining to watch models argue about syntax,” says Aliaksandr, “but it also hints at how software development could scale in the future.” And alongside this multi-agent future, another revolution is happening quietly.

What’s flying under the radar is robotics.

"While you’re busy watching TikTok avatars, humanoid bots are running on sand and serving drinks at conferences. That’s real. That’s coming," Vitali warns.

The innovation team moves constantly between moonshot prototypes and enterprise-grade systems.

Fun with Crickets and Robots

The group’s project portfolio veers from the ambitious to the surreal. Aliaksandr recalls an attempt to build a DIY LiDAR scanner using only an iPhone camera, hoping to churn out 3D models for designers.

Yauheni remembers a client request to use AI for real-time cricket counting — yes, the insects. The use case was alternative protein supply chains.

“That was one of the strangest, most incomprehensible projects we’ve ever had,” he says.

Of course, beneath the bold ideas, there are the tools that make them real.

The Tools Behind the Curtain

When it comes to tools, the team’s preferences differ, but some patterns emerge.

- ChatGPT & Gemini. All paths converge here, whether for quick drafts, context expansion, or offloading repetitive code logic.

- ChatGPT Deep Research. A heavier mode geared toward multi-step research tasks that synthesizes information with citations.

- Claude. Though many dislike Claude Code, Vitali finds it quite useful. It’s not a polished IDE by any means, yet it reliably solves problems.

- PyTorch. For building and training neural networks from scratch.

- Scikit-learn + SciPy. The classic stack for structured ML (feature engineering, classic algorithms, statistical methods): simple and explainable.

Vitali notes: “Tools evolve; performance metrics shift. What keeps the pipeline alive is adaptability.”

Too often, he observes, users treat AI as a vending machine. Better, he argues, to offer full context, supplemented with images if needed, and treat the system as a colleague, capable of learning and refining over time.

But staying adaptable also depends on knowing where to look for the right signals in a noisy field.

Favorite Resources for Keeping Up with AI

- AlphaSignal (newsletter). A highly curated AI newsletter that delivers 5-minute email summaries of major developments, models, and research. It aims to filter noise, so you don’t have to scroll through X or RSS endlessly.

- ODS.ai / Open Data Science (community). A data science community and platform hosting talks, blogs, conference tracks, and technical content. It carries that deep “old-school” approach.

- Two Minute Papers (YouTube channel). Distills recent scientific and technical research into bite-sized videos.

Together, these sources offer direction, though they naturally leave room for different views within the team.

Where Minds Meet and Diverge

Throughout the interviews, several points of consensus and tension emerged:

- Consensus: Generative AI is the core frontier. The team agrees that LLMs will remain central to enterprise projects for the foreseeable future.

- Divergence: Vibe-coding splits opinion. Vitali views it as overhyped, while other team members see merit in lowering entry barriers, even if prototypes rarely scale.

- Shared caution: Everyone stresses that AI is not a turnkey replacement for expertise. As Aliaksandr warns, “Soon not knowing how to use LLMs will be like a 1990s accountant not knowing Excel.”

These debates naturally lead to a bigger question…

What Comes Next

Asked about the next three to five years, Ilya envisions robust “code-assistants” that can reliably replace junior-level tasks while preserving context across massive repositories. Yauheni imagines an AI that can instantly strip the filler out of bloated research papers: “Press a button, and the nonsense disappears.”

Vitali takes a broader view. To him, these shifts mark an epochal turning point:

“Much like the industrial revolution in manufacturing replaced handwork with automated assembly lines, the software development lifecycle will shift from manual execution to intelligent orchestration. This transformation will free human experts from repetitive tasks and allow them to focus on higher-level strategy, innovation, and oversight.”
